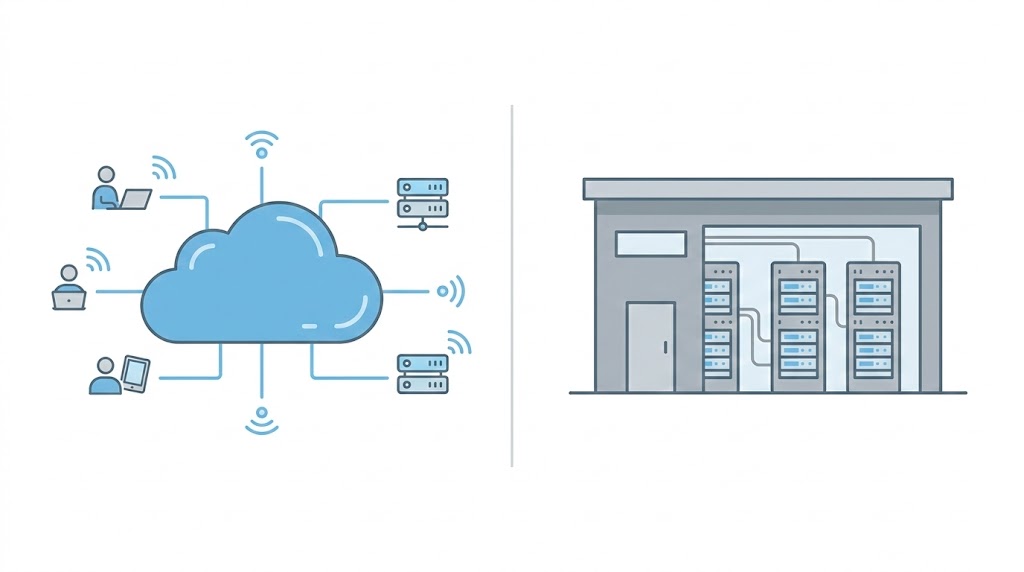

Choosing between cloud and on-prem AI is more than a technology choice; it directly affects cost management, scalability, compliance, and innovation. Each option has strengths and weaknesses that depend on workload patterns, data sensitivity, and in-house IT capabilities.

When evaluating the two, businesses must weigh long-term scalability, infrastructure cost, and compliance requirements, because the decision affects both operational efficiency and innovation speed.

Cloud AI provides elastic compute, on-demand GPUs, and managed AI services, making it ideal for rapid experimentation and scaling.

On-premises AI offers greater control over data, infrastructure, and security, and is often preferred for regulated industries and sensitive workloads.

Cost structure differs: Cloud operates on OpEx (pay-as-you-go), while on-prem requires upfront CapEx but may lower long-term costs for steady, high-utilization workloads.

Latency matters: On-prem or edge deployments reduce network delays for real-time AI applications (e.g., manufacturing, healthcare imaging).

Compliance and data residency requirements may favor on-prem or hybrid architectures.

Operational complexity varies: Cloud reduces infrastructure management; on-prem requires in-house hardware and maintenance expertise.

Hybrid AI models combine cloud scalability with on-prem control, offering flexibility for enterprises with mixed requirements.

The best deployment model depends on workload predictability, regulatory needs, budget strategy, performance requirements, and internal IT capabilities.

1. Scalability & Elastic Compute Capacity

Amazon Web Services, Microsoft Azure, and Google Cloud offer on-demand access to GPU and accelerator infrastructure, so businesses can scale AI training and inference workloads without buying hardware up front.

Cloud providers list elastic infrastructure as one of their main advantages: companies can scale resources up or down in response to demand.

Source: Overview of Amazon Web Services, AWS whitepaper.

Why it matters: Cloud elasticity prevents hardware underutilization and shortens time-to-market for AI workloads that are experimental, seasonal, or unpredictable (such as spikes in model training).
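To make the underutilization point concrete, here is a minimal sketch that estimates how busy a fixed cluster would be if it were sized for peak demand. The demand figures and the function name `peak_sized_utilization` are hypothetical and chosen only for illustration.

```python
def peak_sized_utilization(demand_per_period: list[float]) -> float:
    """Average utilization of a fixed cluster sized to the highest demand seen."""
    peak = max(demand_per_period)
    return sum(demand_per_period) / (peak * len(demand_per_period))


# Hypothetical GPUs needed in each of eight periods, with a training spike
# in the middle (illustrative numbers, not measurements).
demand = [2, 2, 2, 16, 16, 2, 2, 2]

utilization = peak_sized_utilization(demand)
print(f"Fixed cluster sized for peak is busy {utilization:.0%} of the time")
```

With spiky demand like this, most of a peak-sized cluster sits idle between spikes, which is the situation elastic capacity is meant to avoid.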

2. Latency & Real-Time Processing Requirements

Inference latency can directly affect outcomes in robotics, manufacturing, and medical imaging. Running AI workloads on-premises or at the edge avoids the network round trip to a remote data center.

Industry publications emphasize the advantages of edge AI in manufacturing settings where real-time control is crucial.

Why it matters: On-premises or edge deployment might be required if milliseconds affect user experience, production quality, or safety.
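One way to reason about this is a simple latency budget: add the network round trip to the model's inference time and compare the total with what the application can tolerate. The sketch below assumes hypothetical round-trip times, inference time, and budget; real values would come from measurement.

```python
def total_latency_ms(network_rtt_ms: float, inference_ms: float) -> float:
    """End-to-end latency as network round trip plus model inference time."""
    return network_rtt_ms + inference_ms


def meets_budget(network_rtt_ms: float, inference_ms: float, budget_ms: float) -> bool:
    """True if the deployment option fits within the latency budget."""
    return total_latency_ms(network_rtt_ms, inference_ms) <= budget_ms


# Hypothetical figures for illustration only: (round trip ms, inference ms).
BUDGET_MS = 20.0
options = {
    "cloud region": (40.0, 8.0),
    "on-prem / edge": (1.0, 8.0),
}

for name, (rtt, infer) in options.items():
    verdict = "within budget" if meets_budget(rtt, infer, BUDGET_MS) else "over budget"
    print(f"{name}: {total_latency_ms(rtt, infer):.1f} ms, {verdict}")
```

The model is deliberately crude: it ignores queuing, serialization, and jitter, all of which tend to widen the gap for remote deployments.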

3. Data Sovereignty & Regulatory Compliance

Government and healthcare institutions must follow regulations governing how data is transmitted and stored.

The U.S. Department of Health & Human Services (HHS) offers guidance on HIPAA and cloud computing obligations for managing protected health information (PHI).

Source: HHS HIPAA Cloud Computing Guidance.

Why it matters: On-premise or hybrid architectures may simplify compliance if regulations call for stringent data residency, auditability, or physical control.

4. Total Cost of Ownership (TCO) Over Time

The cloud converts capital expenditures (CapEx) into operating expenses (OpEx). On-premises investments, however, might be preferred for long-term GPU-intensive workloads.

A Lenovo TCO analysis of generative AI deployments compares the cost of cloud and on-premises infrastructure over several years.

Source: Generative AI TCO Analysis, Lenovo Press.

Why it matters: Owning infrastructure may lower long-term costs per training cycle for consistent, high-utilization workloads.
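As a rough illustration of that trade-off, the sketch below estimates the utilization level at which owning hardware costs the same as renting it. Every figure (hourly cloud rate, purchase price, yearly operating cost, lifetime) is a hypothetical placeholder rather than vendor pricing, and the model ignores discounts, financing, and resale value.

```python
HOURS_PER_YEAR = 24 * 365


def cloud_cost(gpu_hours: float, hourly_rate: float) -> float:
    """Pay-as-you-go (OpEx) cost: you pay only for the hours you use."""
    return gpu_hours * hourly_rate


def on_prem_cost(capex: float, annual_opex: float, years: float) -> float:
    """Ownership cost: upfront purchase (CapEx) plus yearly running costs."""
    return capex + annual_opex * years


def break_even_utilization(
    hourly_rate: float, capex: float, annual_opex: float, years: float
) -> float:
    """Fraction of available hours at which cloud and on-prem costs are equal."""
    total_hours = HOURS_PER_YEAR * years
    return on_prem_cost(capex, annual_opex, years) / (hourly_rate * total_hours)


# Hypothetical inputs for a single GPU over three years (assumptions, not quotes).
utilization = break_even_utilization(
    hourly_rate=3.0, capex=30_000.0, annual_opex=6_000.0, years=3
)
print(f"Break-even utilization: {utilization:.0%}")
```

Above the break-even utilization, ownership is cheaper under these assumptions; below it, pay-as-you-go wins. A result above 100% would mean the cloud is cheaper at any usage level.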

5. Dedicated Infrastructure & Hardware Performance

Organizations building internal AI clusters frequently use high-density GPU platforms such as NVIDIA DGX systems, which are designed specifically for enterprise AI workloads.

NVIDIA describes DGX platforms as enterprise AI infrastructure for reliable, high-performance computing.

Source: NVIDIA DGX Platform documentation.

Why it matters: For long-term AI training pipelines, on-premise clusters provide consistent throughput and networking performance.

6. Operational Responsibility & Talent Requirements

Cloud providers manage availability, infrastructure, and a variety of AI services, including managed training, managed inference, and MLOps platforms, which reduces the need for internal data center management.

Vendor documentation highlights managed services as a major cloud benefit.

Source: Overview of Amazon Web Services, AWS whitepaper.

Why it matters: Cloud computing speeds up deployment and lessens operational burden if your company lacks specialized infrastructure engineers.

7. Hybrid Flexibility & Future-Proofing

Many enterprises adopt hybrid models to combine the benefits of cloud scalability and on-prem control.

Microsoft offers Azure Arc for hybrid resource management, while Google provides Anthos for multi-cloud and on-prem application consistency.

Source: Google Cloud Hybrid & Multi-Cloud Application Platform documentation.

Why it matters: Hybrid allows businesses to keep sensitive data on-prem while using cloud resources for training, analytics, or burst capacity.
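A hybrid setup ultimately needs a rule for deciding where each workload runs. The sketch below shows one possible placement policy, assuming a hypothetical `Workload` record with two flags; real policies would also consider cost, latency, and the specific regulations involved.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical description of a workload for placement purposes."""
    name: str
    contains_sensitive_data: bool
    needs_burst_capacity: bool


def place(workload: Workload) -> str:
    """Pick a location: sensitive data stays on-prem, bursty work goes to cloud."""
    if workload.contains_sensitive_data:
        return "on-prem"
    if workload.needs_burst_capacity:
        return "cloud"
    # Assumption: workloads with neither constraint can run in either place.
    return "either"


workloads = [
    Workload("patient-imaging-inference", True, False),
    Workload("quarterly-model-retraining", False, True),
    Workload("internal-dashboard", False, False),
]

for w in workloads:
    print(f"{w.name}: {place(w)}")
```

Checking data sensitivity first reflects the priority described above: control over sensitive data takes precedence over access to extra capacity.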

Real-World Use Cases: How AI Adds Business Value

Manufacturing

Edge AI enables predictive maintenance and anomaly detection to minimize downtime.

Source: Siemens Industrial Edge documentation.

Healthcare

AI inference in hospital networks supports diagnostic imaging while ensuring regulatory compliance.

Source: HHS HIPAA cloud guidance.

Retail & eCommerce

Cloud AI enables personalization, demand forecasting, and recommendation engines at scale.

Source: Google Cloud Partner & Retail Solutions documentation.

Final Decision Framework

| Priority | Recommended Model |
| --- | --- |
| Rapid scaling & experimentation | Cloud |
| Strict compliance & data residency | On-Prem or Hybrid |
| Millisecond inference latency | On-Prem / Edge |
| Steady, high GPU utilization | On-Prem (TCO dependent) |
| Limited infrastructure staff | Cloud |
| Mixed regulatory + scale needs | Hybrid |

This table condenses the decision framework into a starting point for enterprise evaluation.
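The framework can also be expressed as a simple lookup. The sketch below encodes the table's rows in a dictionary and returns the recommended models for a set of priorities; the key names and the `recommend` function are hypothetical labels introduced here, not part of any vendor tool.

```python
# Each key is a shorthand label (an assumption) for one row of the table.
RECOMMENDATIONS = {
    "rapid_scaling": "Cloud",
    "strict_compliance": "On-Prem or Hybrid",
    "millisecond_latency": "On-Prem / Edge",
    "steady_gpu_utilization": "On-Prem (TCO dependent)",
    "limited_infra_staff": "Cloud",
    "mixed_regulatory_and_scale": "Hybrid",
}


def recommend(priorities: list[str]) -> list[str]:
    """Return the table's recommendation for each priority, without duplicates."""
    models: list[str] = []
    for priority in priorities:
        model = RECOMMENDATIONS[priority]
        if model not in models:
            models.append(model)
    return models


print(recommend(["rapid_scaling", "limited_infra_staff"]))
print(recommend(["strict_compliance", "millisecond_latency"]))
```

When the priorities point to different models, as in the second call, that disagreement is itself a signal that a hybrid architecture may be worth evaluating.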

Conclusion

There is no universal answer in the cloud versus on-prem AI debate; each deployment model serves different business priorities.

It all depends on your workload intensity, regulatory needs, cost structure, and in-house expertise. Many organizations find a hybrid approach strikes the right balance between scalability and control.

The key is to choose the best AI deployment strategy for your long-term business goals, not just your short-term requirements.

Thorough analysis upfront can save you from costly migrations and infrastructure overhauls down the road.

A well-considered AI deployment strategy helps ensure your technology infrastructure grows with your business ambitions.

Disclaimer

The information provided in this article is for general informational purposes only. While every effort has been made to ensure accuracy, technology costs, vendor offerings, and compliance requirements may change over time. Businesses should conduct their own due diligence or consult qualified professionals before making infrastructure or investment decisions.